Neuton.AI presented In-Sensor AI at MEMS & Sensors Executive Congress 2023

We had the pleasure of presenting the In-Sensor AI concept at the SEMI MEMS & Sensors Executive Congress 2023. In-Sensor AI lets edge and always-on devices with limited resources and battery life operate more efficiently. It processes large amounts of data directly on the sensor and sends only the relevant results to the main MCU, which significantly reduces battery consumption. Two key factors made this possible: programmable sensors, and neural network frameworks capable of producing ultra-small models.

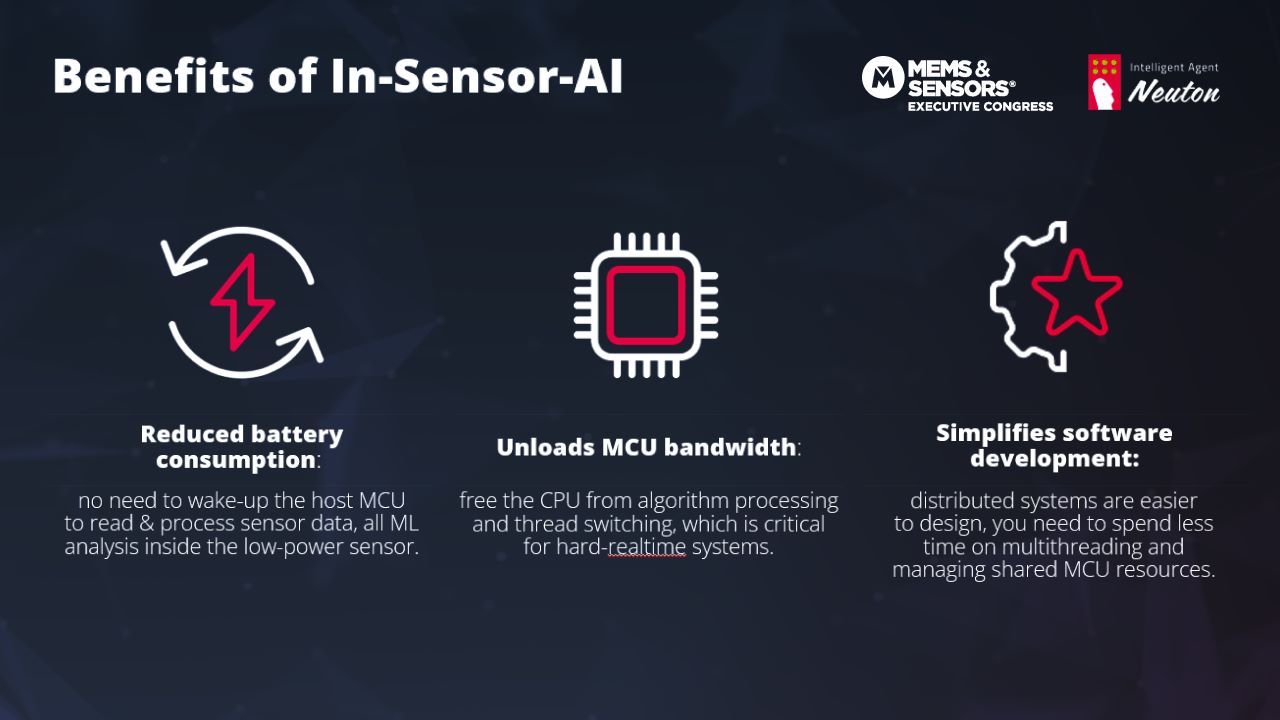

The In-Sensor AI architecture optimizes the energy consumption of the entire system. Instead of streaming raw data from the sensor, the sensor sends only processed results, so the host MCU can stay in sleep mode rather than waking up to read and process sensor data. All machine learning inference runs inside the low-power sensor. This offloads the MCU by freeing the CPU from algorithm processing and thread switching, which is critical for hard real-time systems. It also simplifies software development: distributed systems are easier to design, and less time goes into multithreading and managing shared MCU resources.
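To make the wake-up argument concrete, here is a minimal simulation sketch, assuming a hypothetical fixed window size and a toy mean-energy threshold standing in for the in-sensor neural network (the names `WINDOW`, `toy_classifier`, `host_wakeups_raw`, and `host_wakeups_in_sensor` are illustrative, not part of any ST, Bosch, or Neuton.AI API). It counts how often the host would be woken when it processes every window itself, versus when the sensor classifies each window and interrupts the host only for non-idle results.

```python
from typing import Callable, List, Sequence

# Hypothetical parameters for illustration only (not taken from any real sensor).
WINDOW = 4            # samples per inference window
IDLE_LABEL = "idle"


def toy_classifier(window: Sequence[float]) -> str:
    """Stand-in for the in-sensor model: a mean-energy threshold, NOT a neural network."""
    energy = sum(x * x for x in window) / len(window)
    return "motion" if energy > 1.0 else IDLE_LABEL


def windows(samples: Sequence[float]) -> List[Sequence[float]]:
    """Split the stream into complete, non-overlapping windows."""
    return [samples[i:i + WINDOW] for i in range(0, len(samples) - WINDOW + 1, WINDOW)]


def host_wakeups_raw(samples: Sequence[float]) -> int:
    """Raw streaming: the host wakes for every window to read and process it itself."""
    return len(windows(samples))


def host_wakeups_in_sensor(
    samples: Sequence[float], classify: Callable[[Sequence[float]], str]
) -> List[str]:
    """In-sensor inference: the sensor classifies each window and raises an
    interrupt (waking the host) only when the result is not idle."""
    events = []
    for w in windows(samples):
        label = classify(w)
        if label != IDLE_LABEL:
            events.append(label)  # each event corresponds to one host wake-up
    return events


if __name__ == "__main__":
    stream = [0.1] * 8 + [2.0] * 4 + [0.1] * 4  # 16 samples: quiet, burst, quiet
    print("raw streaming wakeups:", host_wakeups_raw(stream))
    events = host_wakeups_in_sensor(stream, toy_classifier)
    print("in-sensor wakeups:", len(events), events)
```

With this synthetic stream the host wakes four times under raw streaming but only once with in-sensor classification; real savings depend on the sensor, the model, and how often the monitored event actually occurs.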

During the session, our CTO, Blair Newman, showcased Neuton.AI's TinyML models running on the STMicroelectronics LSM6DSO16IS with its Intelligent Sensor Processing Unit (ISPU) and on the Bosch Sensortec BHI380, a programmable IMU-based AI sensor system. We presented five In-Sensor AI use cases:

On-device Package Tracking detects whether a parcel has been shaken, has fallen, or is being held in the wrong orientation, and whether it is being carried by hand by a courier, transported by car, or not in transit at all and lying on a surface.

Smart Ring as a Remote Control lets you control your TV with gestures. The model recognizes up to 7 gesture classes: swiping right/left to go to the next/previous channel, tapping to play or pause, rotating clockwise/counterclockwise to increase/decrease the volume, and separate classes for idle and unknown gestures.
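On the host side, the model's output only needs to be translated into TV commands. The sketch below assumes hypothetical class labels (`swipe_right`, `rotate_cw`, and so on) and hypothetical command strings; the post does not specify the model's label names or the TV control interface. It shows one way to treat the idle and unknown classes as "do nothing".

```python
from typing import Iterable, List, Optional

# Hypothetical names for the 7 gesture classes described above.
GESTURE_CLASSES = [
    "swipe_right", "swipe_left", "tap", "rotate_cw", "rotate_ccw", "idle", "unknown",
]

# Hypothetical command strings; "idle" and "unknown" are deliberately unmapped.
GESTURE_TO_COMMAND = {
    "swipe_right": "CHANNEL_NEXT",
    "swipe_left": "CHANNEL_PREV",
    "tap": "PLAY_PAUSE",
    "rotate_cw": "VOLUME_UP",
    "rotate_ccw": "VOLUME_DOWN",
}


def command_for(gesture: str) -> Optional[str]:
    """Return the TV command for a gesture, or None if it should be ignored."""
    return GESTURE_TO_COMMAND.get(gesture)


def commands_for(gestures: Iterable[str]) -> List[str]:
    """Translate a sequence of recognized gestures, dropping idle/unknown ones."""
    return [cmd for g in gestures if (cmd := command_for(g)) is not None]


if __name__ == "__main__":
    print(commands_for(["idle", "swipe_right", "unknown", "rotate_cw"]))
```

Having explicit idle and unknown classes lets the model reject everyday hand movements instead of forcing every motion into one of the five command gestures.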

Toothbrushing Tracking runs directly on the toothbrush. It identifies 15 zones of the oral cavity in real time to help make sure that all areas of the mouth are brushed regularly.

Touch-free Interaction for smartwatches supports a range of gestures for controlling a smartwatch, including raising the hand with the wrist up (activation), pinch (forward), double pinch (back), clench (enter), and double clench (exit).

Daily Human Activity Recognition distinguishes complex and similar human actions directly on the ISPU. It can be applied to many use cases, such as elderly care monitoring and tracking hand-washing in children.